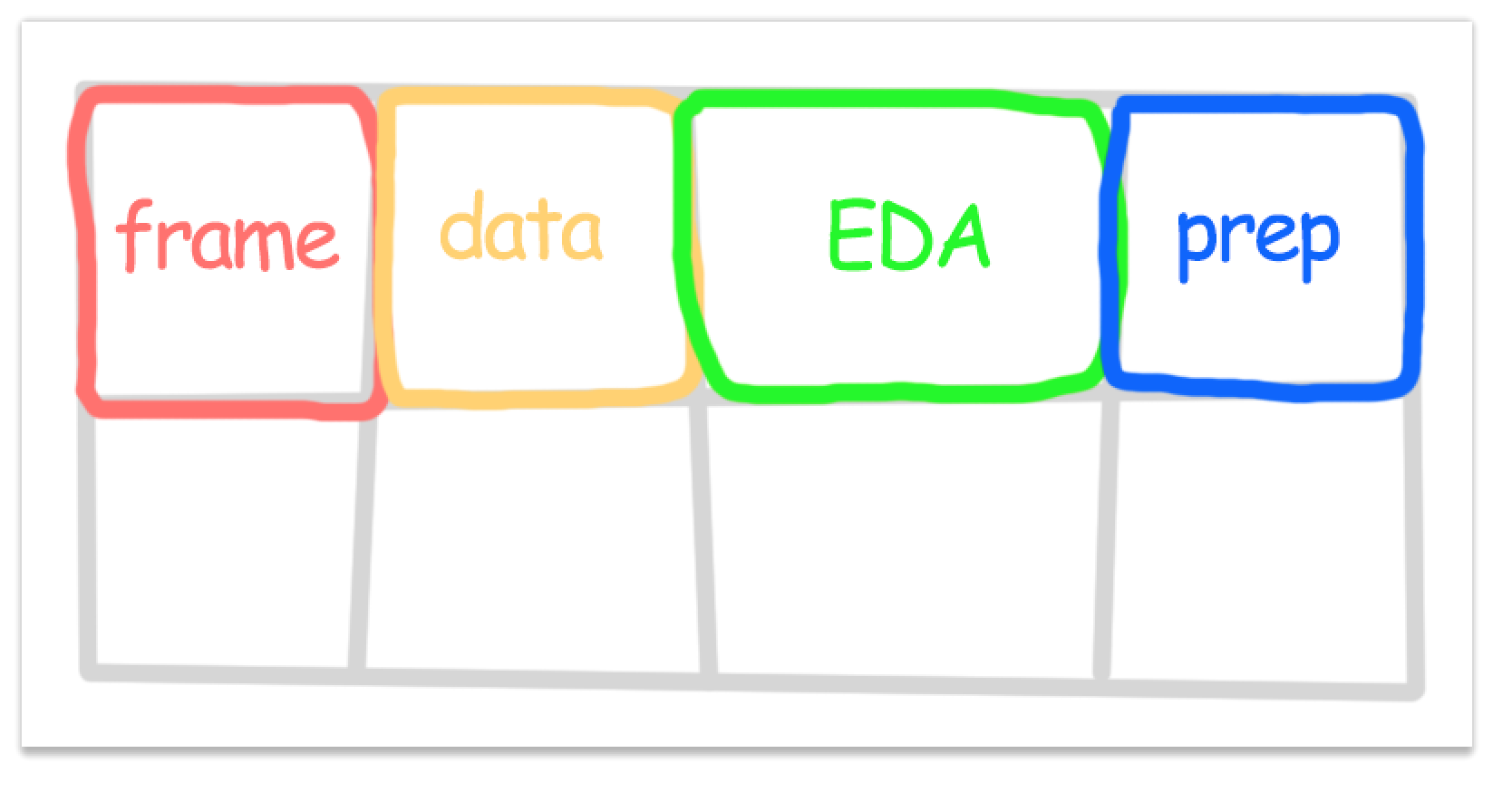

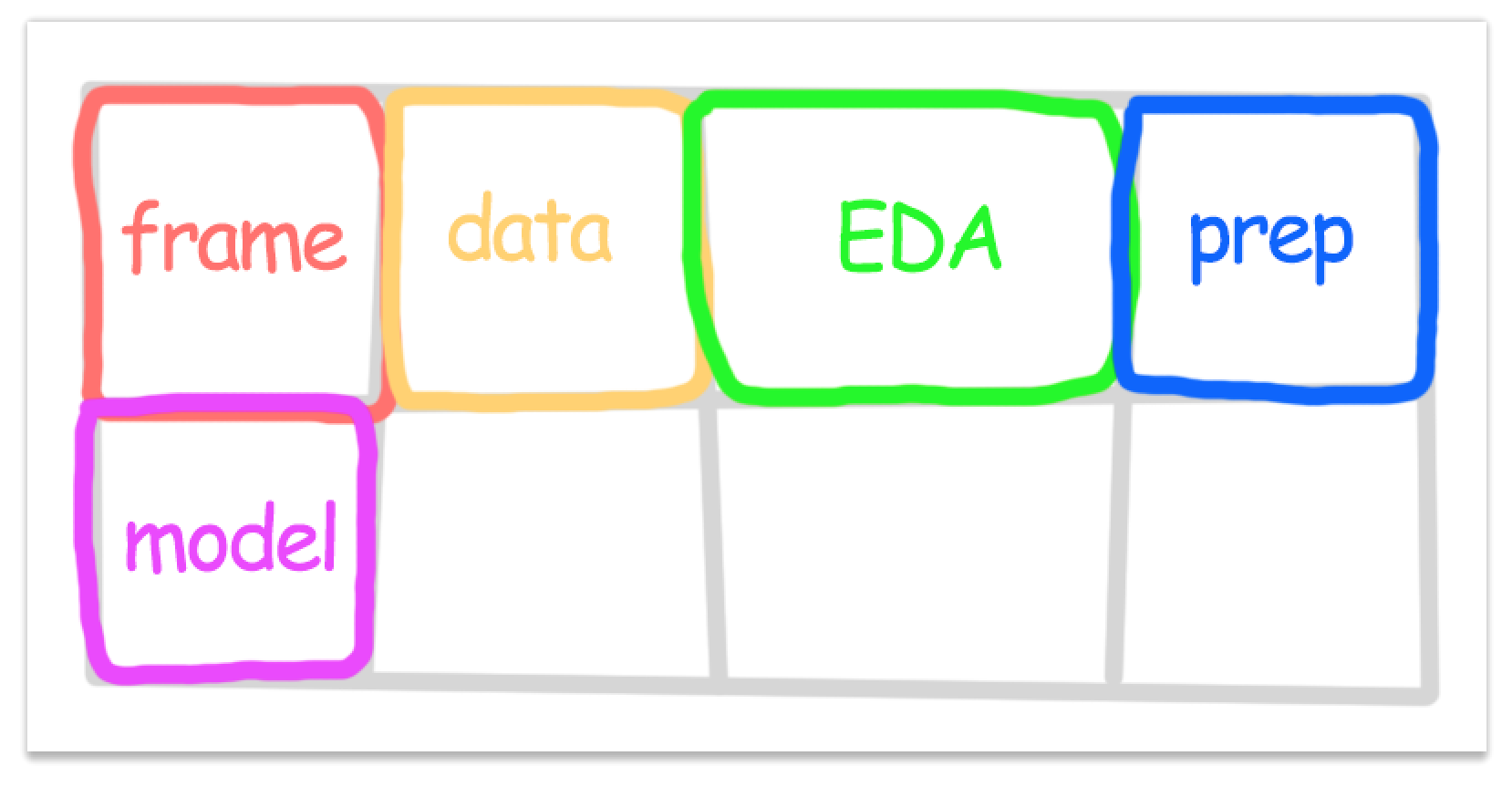

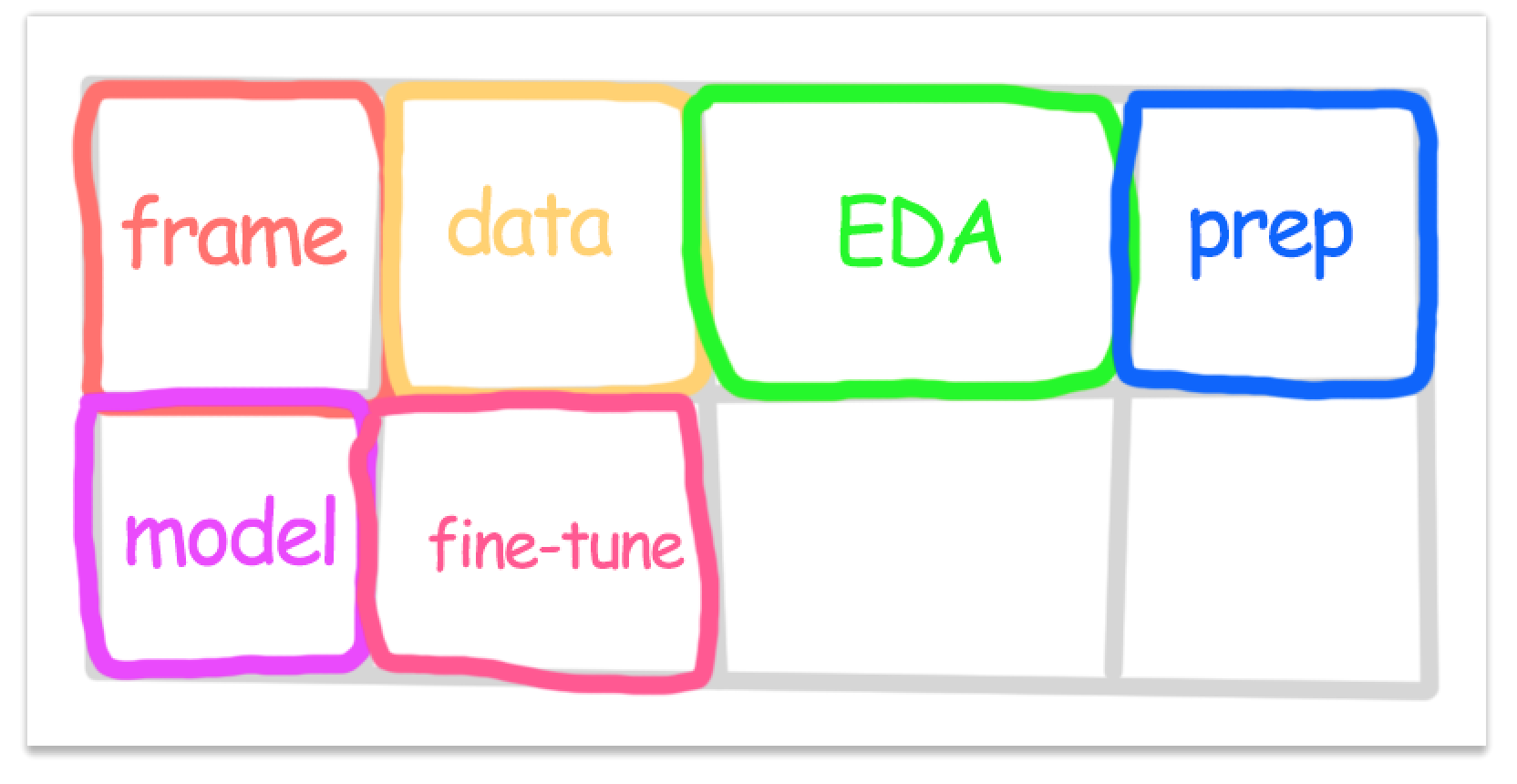

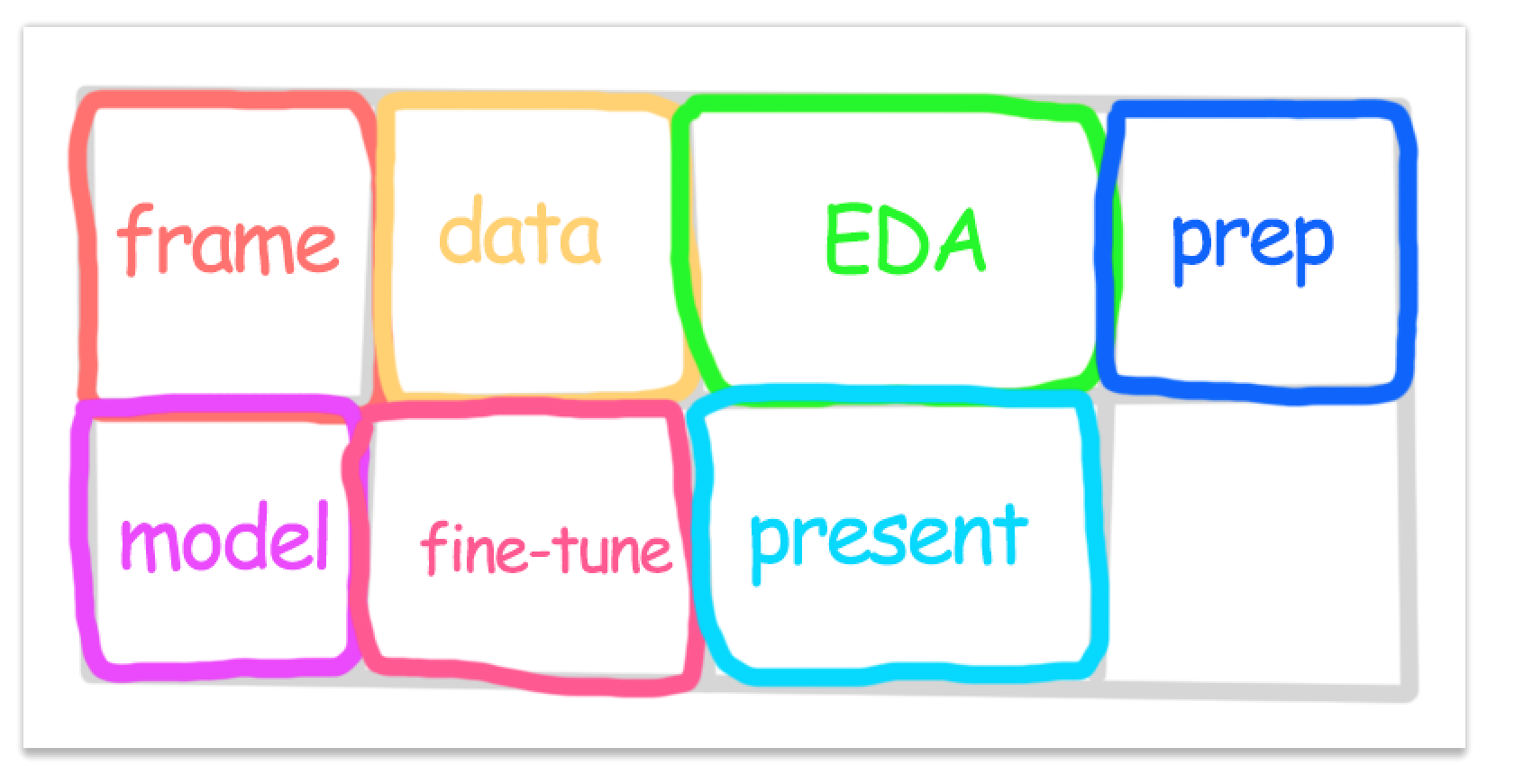

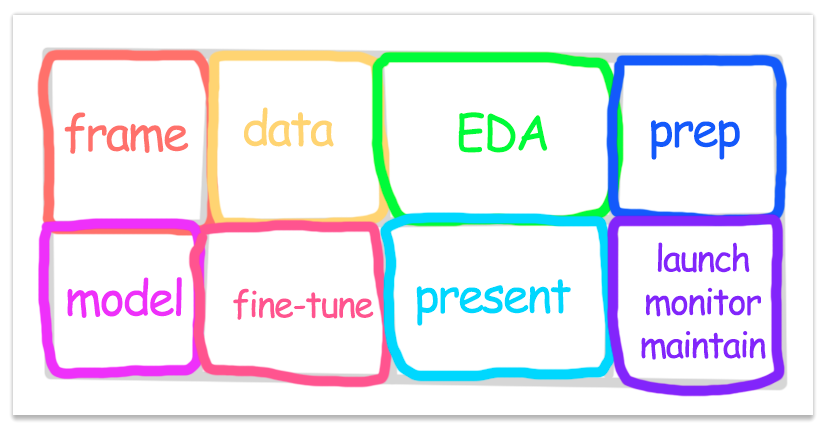

8-Step Machine Learning Checklist

Use this 8-step machine learning checklist to guide you through your machine learning projects.

I found this checklist in the appendix of Hands-On Machine Learning with Scikit-Learn and TensorFlow. Every machine learning project should use this checklist, or at least part of it.

The checklist:

- Frame the problem and look at the big picture.

- Get the data.

- Explore the data to gain insights.

- Prepare the data to better expose the underlying data patterns to machine learning algorithms.

- Explore many different models and shortlist the best ones.

- Fine-tune your models and combine them into a great solution.

- Present your solution.

- Launch, monitor, and maintain your system.

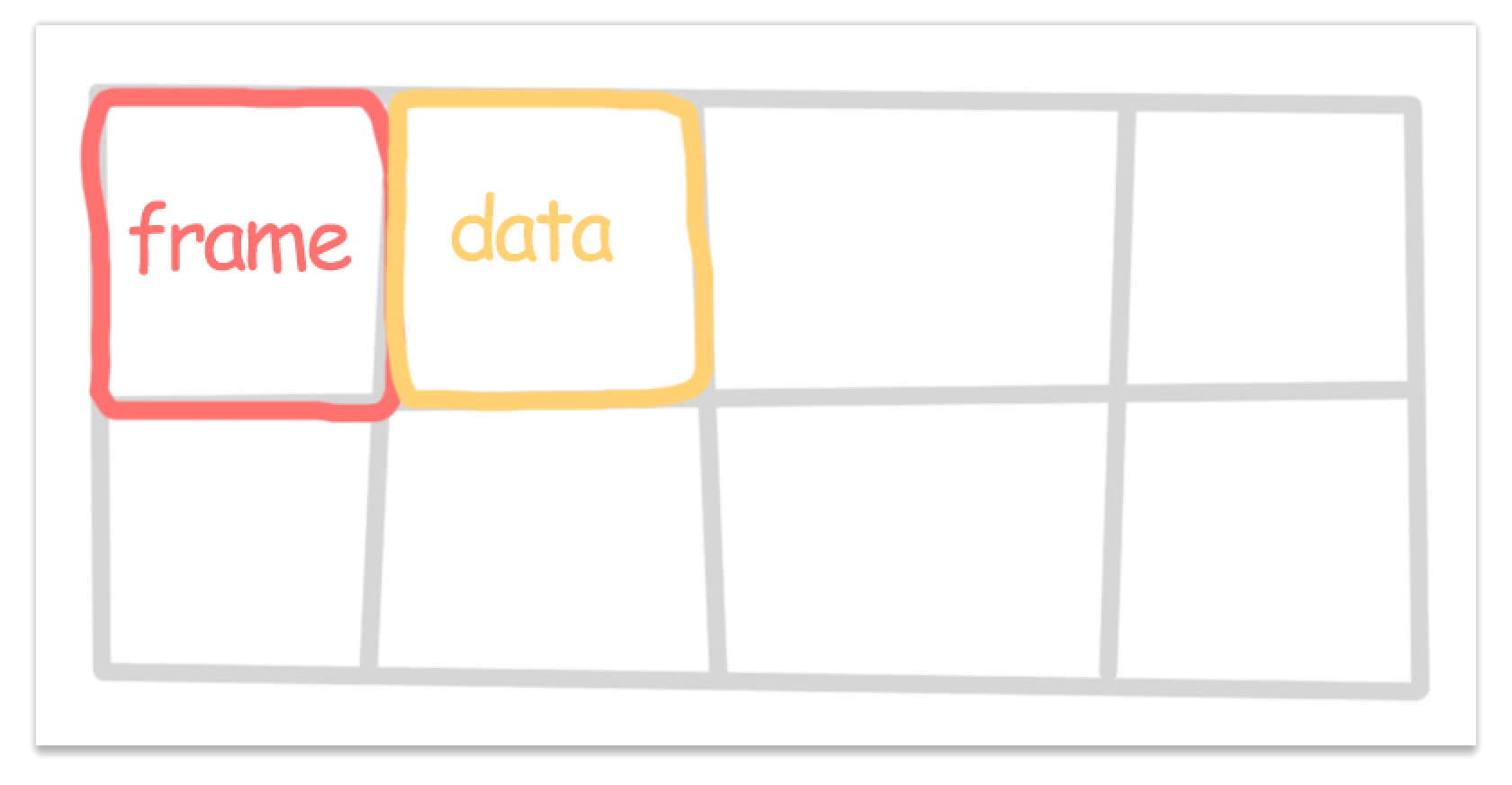

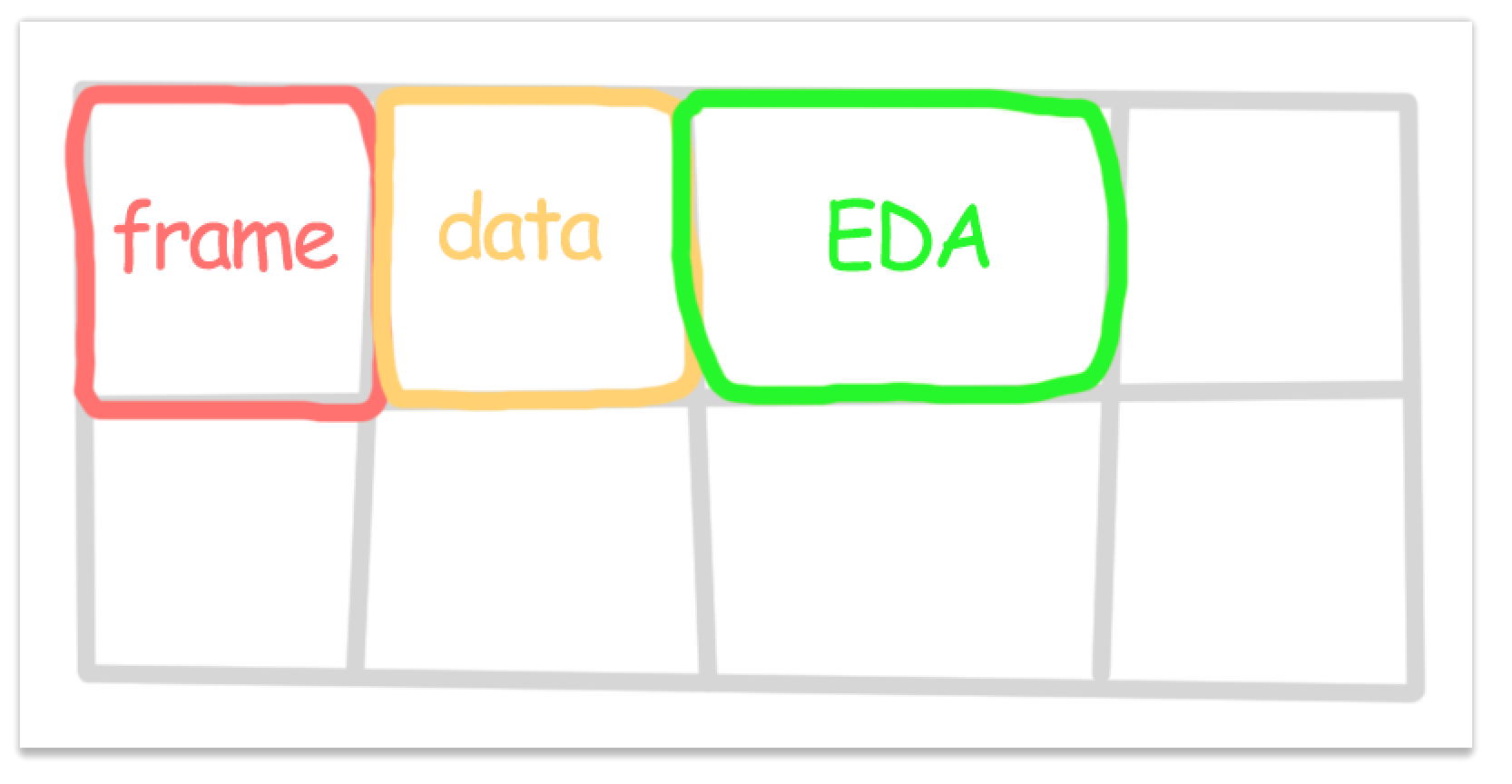

Step 1: Frame the problem and look at the big picture.

In this step you frame the problem and look at the big picture. This step answers questions such as: What problem are we solving? What business objective does the solution serve? Who is going to use it? What is the current solution? At the end of this step you’ll have a solid grasp of what you’re solving and why.

Checklist:

- Define the objective in business terms.

- How will your solution be used?

- What are the current solutions/workarounds (if any)?

- How should you frame this problem (supervised/unsupervised, online/offline, etc.)?

- How should performance be measured?

- Is the performance measure aligned with the business objective?

- What would be the minimum performance needed to reach the business objective?

- What are comparable problems? Can you reuse experience or tools?

- Is human expertise available?

- How would you solve the problem manually?

- List the assumptions you (or others) have made so far.

- Verify assumptions if possible.

Step 2: Get the data

In the second step we fetch the data. Try to automate as much as possible so you always have the freshest data. At the end of this step you will have a raw dataset.

Checklist:

- List the data you need and how much you need.

- Find and document where you can get that data.

- Create a workspace (with enough storage space).

- Get the data.

- Convert the data to a format you can easily manipulate (without changing the data itself).

- Ensure sensitive information is deleted or protected.

- Check the size and type of data (time series, sample, geographical, etc.).

- Sample a test set, put it aside, and never look at it.
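Putting a test set aside is easy once. Keeping it aside when the data is refreshed (as the "automate as much as possible" advice above implies) takes a little care, because a fresh random split would leak old test rows into training. A minimal sketch, assuming each row has a stable unique identifier (the `id` field and the helper names below are hypothetical): decide test-set membership from a hash of that identifier, so a row never switches sides.

```python
import zlib


def is_in_test_set(identifier: str, test_ratio: float) -> bool:
    """Decide test-set membership from a hash of a stable row identifier.

    The decision depends only on the identifier, so a row never switches
    sides when the dataset is refreshed and new rows are appended.
    """
    # zlib.crc32 returns an unsigned 32-bit integer (0 <= h < 2**32) in Python 3.
    return zlib.crc32(identifier.encode("utf-8")) < test_ratio * 2**32


def split_train_test(rows, id_key, test_ratio=0.2):
    """Split a list of dict rows into (train, test) using row[id_key]."""
    train, test = [], []
    for row in rows:
        bucket = test if is_in_test_set(str(row[id_key]), test_ratio) else train
        bucket.append(row)
    return train, test


# Usage: hypothetical rows with a stable "id" field.
rows = [{"id": i, "x": i * 2} for i in range(1000)]
train, test = split_train_test(rows, "id", test_ratio=0.2)

# After a refresh that appends rows, previously seen rows keep their side.
more_rows = rows + [{"id": i, "x": i * 2} for i in range(1000, 1200)]
train2, test2 = split_train_test(more_rows, "id", test_ratio=0.2)
```

The split is only approximately 80/20, since it depends on how the hash values fall; that is the price of stability.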

Step 3: Explore the data

In the third step you perform exploratory data analysis (EDA): you explore the data to assess its “health” and “shape” (missing values, distributions, etc.). At the end of this step you’ll have a solid understanding and feel for the dataset.

Checklist:

- Create a copy of the data for exploration (sampling it down to a manageable size if necessary).

- Create a Jupyter notebook to keep a record of your data exploration.

- Study each attribute and its characteristics:

    - Name

    - Type (categorical, int/float, bounded/unbounded, text, structured, etc.)

    - % of missing values

    - Noisiness and type of noise (stochastic, outliers, rounding errors, etc.)

    - Usefulness for the task

    - Type of distribution (Gaussian, uniform, logarithmic, etc.)

- For supervised learning tasks, identify the target attribute(s).

- Visualize the data.

- Study the correlations between attributes.

- Study how you would solve the problem manually.

- Identify the promising transformations you may want to apply.

- Identify extra data that would be useful.

- Document what you have learned.
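Two of the checklist items above, studying each attribute (type, % of missing values) and studying correlations between attributes, can be started with a few lines of code in your exploration notebook. A minimal standard-library sketch, assuming the data is a list of dicts with `None` marking a missing value; the helper names and the tiny dataset are hypothetical (in practice pandas' `DataFrame.info()`, `isna()`, and `corr()` cover the same ground).

```python
import math


def profile_attribute(rows, name):
    """Summarize one attribute of a list-of-dicts dataset (None = missing)."""
    values = [row.get(name) for row in rows]
    present = [v for v in values if v is not None]
    missing_pct = 100.0 * (len(values) - len(present)) / len(values) if values else 0.0
    numeric = bool(present) and all(
        isinstance(v, (int, float)) and not isinstance(v, bool) for v in present
    )
    return {
        "name": name,
        "type": "numeric" if numeric else "categorical/text",
        "missing_pct": missing_pct,
        "distinct_values": len(set(present)),
    }


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length numeric sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


# Usage on a tiny hypothetical dataset.
rows = [
    {"rooms": 3, "price": 300, "city": "A"},
    {"rooms": 4, "price": 410, "city": "B"},
    {"rooms": None, "price": 250, "city": "A"},
    {"rooms": 2, "price": 200, "city": None},
]
for attr in ("rooms", "price", "city"):
    print(profile_attribute(rows, attr))

# Correlate only rows where both attributes are present.
pairs = [(r["rooms"], r["price"]) for r in rows if r["rooms"] is not None]
print(pearson([a for a, _ in pairs], [b for _, b in pairs]))
```

Pearson correlation only captures linear relationships, so plotting the data remains important.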

Step 4: Prepare the data

In this step you’re going to prepare the data. At the end of this step you’ll have a set of functions/transformers that perform data transformations. These transformers turn your raw data into clean, preprocessed data, ready to be used by machine learning algorithms.

Set yourself up for success by writing functions for your data transformations!

Write functions for all data transformations you apply, for five reasons:

- So you can easily prepare the data the next time you get a fresh dataset

- So you can apply these transformations in future projects

- To clean and prepare the test set

- To clean and prepare new data instances once your solution is live

- To make it easy to treat your preparation choices as hyperparameters
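The five reasons above all follow from one pattern: learn any parameters of a transformation from the training data once (`fit`), then reuse them on every later batch (`transform`). A minimal sketch of that pattern for filling missing values; `MissingValueFiller` is a hypothetical class, but it follows the same fit/transform convention as scikit-learn's `SimpleImputer`. Because the strategy is a constructor argument, it can be tuned later like any other hyperparameter.

```python
import statistics


class MissingValueFiller:
    """Fill missing (None) values in one numeric column.

    fit() learns the fill value from training data only; transform() reuses
    it, so the test set and live data get exactly the same preparation.
    The strategy is a constructor argument, so it can be tuned like any
    other hyperparameter.
    """

    STRATEGIES = ("mean", "median", "zero")

    def __init__(self, strategy="median"):
        if strategy not in self.STRATEGIES:
            raise ValueError(f"unknown strategy: {strategy!r}")
        self.strategy = strategy
        self.fill_value = None

    def fit(self, values):
        present = [v for v in values if v is not None]
        if self.strategy == "zero":
            self.fill_value = 0.0
        elif self.strategy == "mean":
            self.fill_value = statistics.mean(present)
        else:
            self.fill_value = statistics.median(present)
        return self

    def transform(self, values):
        if self.fill_value is None:
            raise RuntimeError("call fit() before transform()")
        return [self.fill_value if v is None else v for v in values]


# Usage: learn from the training column, then reuse on new data.
train_column = [1.0, None, 3.0, 10.0]
filler = MissingValueFiller(strategy="median").fit(train_column)
print(filler.transform([None, 5.0]))  # prints [3.0, 5.0]
```

Note that the test set is never passed to `fit()`: its missing values are filled with the median learned from the training data.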

Checklist:

- Data cleaning:

    - Fix or remove outliers (optional).

    - Fill in missing values (e.g., with zero, mean, median…) or drop their rows (or columns).

- Feature selection (optional):

    - Drop the attributes that provide no useful information for the task.

- Feature engineering, where appropriate:

    - Discretize continuous features.

    - Decompose features (e.g., categorical, date/time, etc.).

    - Add promising transformations of features (e.g., log(x), sqrt(x), x², etc.).

    - Aggregate features into promising new features.

- Feature scaling:

    - Standardize or normalize features.
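Several of the feature engineering and scaling items above map to small, reusable functions. A minimal standard-library sketch; all function names are hypothetical, the bin edges and sample values are made up, and `log_transform` assumes non-negative inputs. In scikit-learn the rough equivalents are `FunctionTransformer`, `KBinsDiscretizer`, and `StandardScaler`.

```python
import bisect
import math
import statistics
from datetime import date


def log_transform(values):
    """log(1 + x): compresses long right tails; assumes every value is >= 0."""
    return [math.log1p(v) for v in values]


def decompose_date(d: date):
    """Break a date into numeric parts a model can use directly."""
    return {"year": d.year, "month": d.month, "day_of_week": d.weekday()}  # Monday = 0


def discretize(values, edges):
    """Replace each value by the index of its bin; edges must be sorted ascending."""
    return [bisect.bisect_right(edges, v) for v in values]


def fit_standardizer(train_values):
    """Learn mean/std on training data; return a function that applies them."""
    mean = statistics.mean(train_values)
    std = statistics.pstdev(train_values) or 1.0  # avoid dividing by zero on a constant column
    return lambda xs: [(x - mean) / std for x in xs]


# Usage
print(decompose_date(date(2024, 3, 15)))        # {'year': 2024, 'month': 3, 'day_of_week': 4}
print(discretize([5, 10, 25], edges=[10, 20]))  # [0, 1, 2]
standardize = fit_standardizer([2, 4, 4, 4, 5, 5, 7, 9])  # mean 5, std 2
print(standardize([5, 9]))                      # [0.0, 2.0]
```

As with imputation, the standardizer's mean and standard deviation come from the training data only and are then reused on the test set and on live data.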

Step 5: Explore different models

Checklist:

- Train many quick-and-dirty models from different categories (e.g., linear, naive Bayes, SVM, Random Forest, neural net, etc.) using standard parameters.

- Measure and compare their performance.

    - For each model, use N-fold cross-validation and compute the mean and standard deviation of the performance measure on the N folds.

- Analyze the most significant variables for each algorithm.

- Analyze the types of errors the models make.

    - What data would a human have used to avoid these errors?

- Perform a quick round of feature selection and engineering.

- Perform one or two more quick iterations of the five previous steps.

- Shortlist the top three to five most promising models, preferring models that make different types of errors.
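The cross-validation step above (mean and standard deviation of a performance measure across N folds) is short enough to write out, which makes it clear what a library call such as scikit-learn's `cross_val_score` is doing for you. A minimal standard-library sketch, assuming RMSE as the performance measure and two hypothetical quick-and-dirty models on made-up, perfectly linear data. The folds are contiguous, so shuffle the rows first if your data is ordered.

```python
import statistics


def k_fold_indices(n, k):
    """Yield (train_idx, val_idx) for k contiguous folds over n samples."""
    fold_sizes = [n // k + (1 if i < n % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        val = list(range(start, start + size))
        train = list(range(0, start)) + list(range(start + size, n))
        yield train, val
        start += size


def cross_val_rmse(fit_predict, xs, ys, k=5):
    """Return (mean, std) of RMSE across k folds.

    fit_predict(train_x, train_y, val_x) must return predictions for val_x.
    """
    scores = []
    for train, val in k_fold_indices(len(xs), k):
        preds = fit_predict([xs[i] for i in train], [ys[i] for i in train],
                            [xs[i] for i in val])
        mse = statistics.mean((p - ys[i]) ** 2 for p, i in zip(preds, val))
        scores.append(mse ** 0.5)
    return statistics.mean(scores), statistics.stdev(scores)


def mean_baseline(train_x, train_y, val_x):
    """Quick-and-dirty model 1: always predict the training mean."""
    m = statistics.mean(train_y)
    return [m] * len(val_x)


def least_squares_line(train_x, train_y, val_x):
    """Quick-and-dirty model 2: 1-D ordinary least squares, y = a*x + b."""
    mx, my = statistics.mean(train_x), statistics.mean(train_y)
    sxx = sum((x - mx) ** 2 for x in train_x)
    a = sum((x - mx) * (y - my) for x, y in zip(train_x, train_y)) / sxx
    b = my - a * mx
    return [a * x + b for x in val_x]


# Usage on hypothetical, perfectly linear data: y = 2x + 1.
xs = list(range(20))
ys = [2 * x + 1 for x in xs]
for name, model in [("mean baseline", mean_baseline), ("linear", least_squares_line)]:
    mean_rmse, std_rmse = cross_val_rmse(model, xs, ys, k=5)
    print(f"{name}: RMSE {mean_rmse:.3f} +/- {std_rmse:.3f}")
```

Reporting the standard deviation alongside the mean tells you whether the gap between two models is larger than the fold-to-fold noise.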

Step 6: Fine-tune your models and combine them into a great solution.

Don’t tweak your model after measuring the generalization error: you would just start overfitting the test set.

Checklist:

- Fine-tune the hyperparameters using cross-validation:

    - Treat your data transformation choices as hyperparameters, especially when you are not sure about them (e.g., if you’re not sure whether to replace missing values with zeros or with the median value, or to just drop the rows).

    - Unless there are very few hyperparameter values to explore, prefer random search over grid search. If training is very long, you may prefer a Bayesian optimization approach (e.g., using Gaussian process priors, as described by Jasper Snoek et al.).

- Try ensemble methods. Combining your best models will often produce better performance than running them individually.

- Once you are confident about your final model, measure its performance on the test set to estimate the generalization error.
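Random search, with a data-preparation choice treated as one more hyperparameter, takes only a loop. A minimal sketch, assuming you already have a function that returns a cross-validated error for a given set of hyperparameters. The `random_search` helper and the `toy_cv_error` scoring function are hypothetical illustrations; scikit-learn's `RandomizedSearchCV` provides the same idea with cross-validation built in.

```python
import math
import random


def random_search(score_fn, space, n_iter=20, seed=0):
    """Evaluate n_iter random hyperparameter combinations; return the best.

    space maps each hyperparameter name to either a list of discrete choices
    or a callable that takes a random.Random and returns a sampled value.
    score_fn(params) should return a cross-validated error (lower is better).
    """
    rng = random.Random(seed)
    best_params, best_score = None, float("inf")
    history = []
    for _ in range(n_iter):
        params = {
            name: (sampler(rng) if callable(sampler) else rng.choice(sampler))
            for name, sampler in space.items()
        }
        score = score_fn(params)
        history.append((score, params))
        if score < best_score:
            best_params, best_score = params, score
    return best_params, best_score, history


space = {
    # A data-preparation choice treated as a hyperparameter.
    "imputation": ["zero", "mean", "median", "drop_rows"],
    # A continuous hyperparameter sampled on a log scale between 1e-3 and 10.
    "alpha": lambda rng: 10 ** rng.uniform(-3, 1),
}


def toy_cv_error(params):
    # Hypothetical stand-in for "run cross-validation with these params".
    penalty = {"zero": 0.5, "mean": 0.2, "median": 0.1, "drop_rows": 0.3}
    return penalty[params["imputation"]] + abs(math.log10(params["alpha"]) + 1)


best, score, history = random_search(toy_cv_error, space, n_iter=50, seed=42)
print(best, score)
```

Sampling continuous values on a log scale matters when you don't know the right order of magnitude; a grid would waste most of its evaluations in the wrong range.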

Step 7: Present your solution.

Checklist:

- Document what you have done.

- Create a nice presentation.

    - Make sure you highlight the big picture first.

- Explain why your solution achieves the business objective.

- Don’t forget to present interesting points you noticed along the way.

- Describe what worked and what did not.

- List your assumptions and your system’s limitations.

- Ensure your key findings are communicated through beautiful visualizations or easy-to-remember statements (e.g., “the median income is the number-one predictor of housing prices”).

Step 8: Launch, monitor, and maintain your system.

Checklist:

- Get your solution ready for production (plug into production data inputs, write unit tests, etc.).

- Write monitoring code to check your system’s live performance at regular intervals and trigger alerts when it drops.

    - Beware of slow degradation: models tend to “rot” as data evolves.

    - Measuring performance may require a human pipeline (for example, people labeling a sample of live predictions).

    - Also monitor your inputs’ quality.

- Retrain your models on a regular basis on fresh data (automate as much as possible).
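The monitoring items above boil down to two periodic checks: is live performance still close to what you measured before launch, and do the incoming inputs still look like the training data? A minimal sketch, assuming you can collect recent scores (e.g., accuracy on a human-labeled sample) and raw input values; the function names, thresholds, and valid ranges are hypothetical, and in production the returned messages would feed your alerting system rather than be printed.

```python
import statistics


def check_live_performance(recent_scores, baseline, max_drop=0.05):
    """Return an alert message if the recent mean score fell too far below baseline.

    Scores are "higher is better" (e.g., accuracy); max_drop is a fraction of baseline.
    """
    current = statistics.mean(recent_scores)
    if current < baseline * (1 - max_drop):
        return f"ALERT: live score {current:.3f} below baseline {baseline:.3f}"
    return None


def check_input_quality(values, low, high, max_bad_fraction=0.01):
    """Flag a batch of inputs with too many missing or out-of-range values."""
    bad = sum(1 for v in values if v is None or not (low <= v <= high))
    fraction = bad / len(values)
    if fraction > max_bad_fraction:
        return f"ALERT: {fraction:.1%} of inputs missing or out of range"
    return None


# Usage with made-up numbers.
print(check_live_performance([0.90, 0.91, 0.89], baseline=0.90))  # None
print(check_live_performance([0.80, 0.82], baseline=0.90))        # an ALERT message
print(check_input_quality([1, 2, None, 500], low=0, high=100))    # 50.0% flagged
```

A fixed tolerance catches sudden drops; to catch the slow “rot” described above, you would also compare against a longer history of scores.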
